
Nov 27, 2025

Australia will require platforms to block under-16s from social media from 10 December 2025. This simple guide explains what’s changing, why it matters, and how platforms and families will be affected. Photo by: Unsplash

From 10 December 2025, Australia will require many social platforms to stop people under 16 from holding accounts.

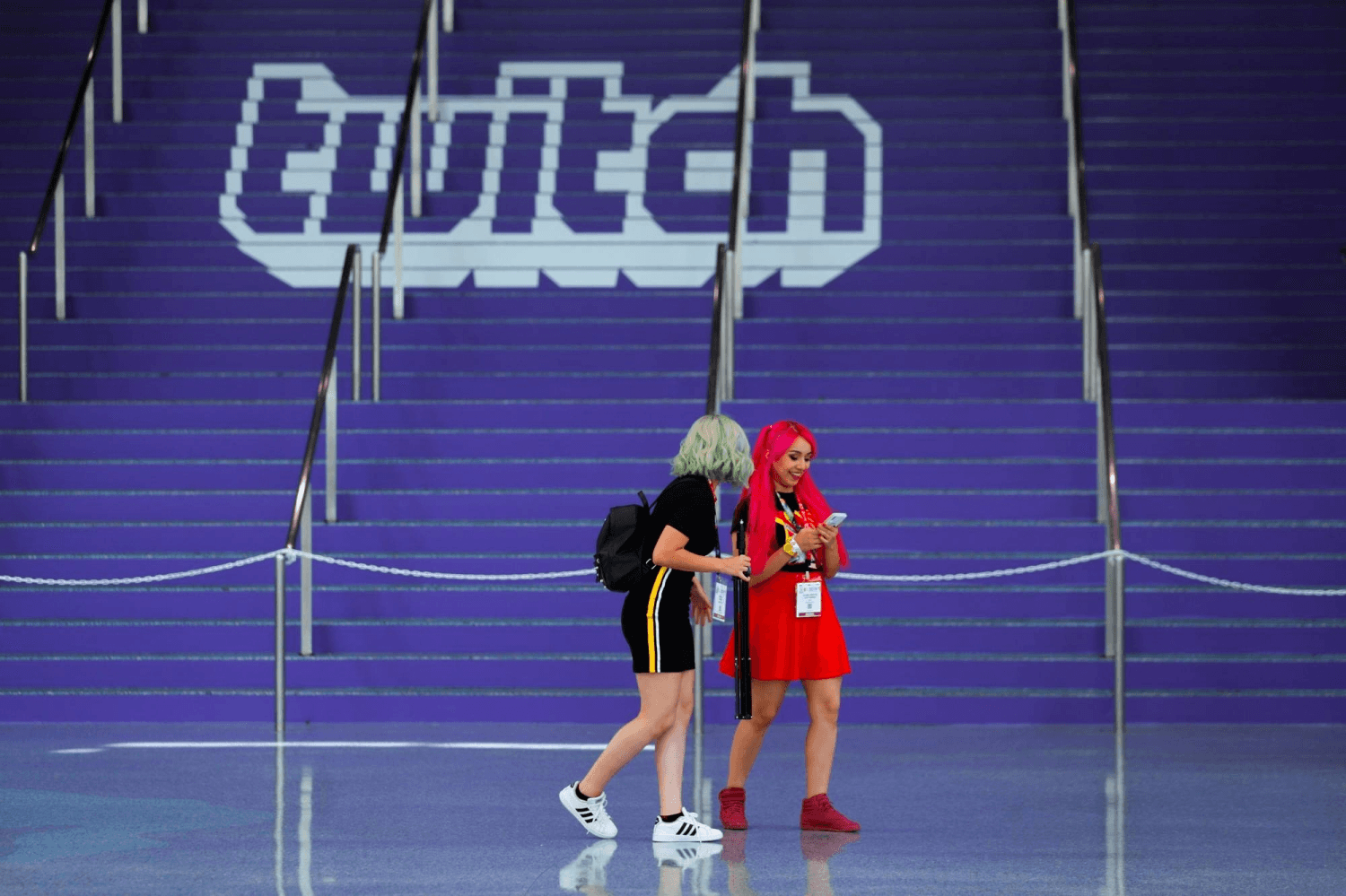

That means platforms such as Instagram, TikTok, X, YouTube, Snapchat and now Twitch must take “reasonable steps” to prevent under-16 users from having active accounts or face big fines. This is part of the government’s social-media minimum-age rules.

Officials say the rule is about protecting young teens from harms linked to social apps: exposure to extreme content, predators, addictive design, and mental-health risks.

The government and the eSafety regulator argue a minimum age gives children more time to mature before using interactive social platforms. They present this as a public-health and safety move for young people.

Twitch, a live-streaming site popular with gamers, was recently added to the list because its interactive features, such as live chat, gifting, and raids, are treated as social media under the law.

That’s why existing Twitch accounts held by under-16s will need to be closed, and new sign-ups by under-16s blocked, once the rules kick in.

Platforms were assessed by eSafety and added based on whether their core features meet the law’s definition of “social media.”

The law asks platforms to take “reasonable steps” to prevent under-16 accounts. That can include removing under-16 accounts, blocking new signups by age, or using existing verification tools.

Companies that don’t comply could face civil fines up to about AUD $49.5 million. Some firms (like Meta) have already started notifying under-16 users and giving families time to download or delete data.

For teens under 16, it will mean losing direct access to these platforms in Australia: accounts will be deactivated and sign-ups blocked.

Parents will be encouraged to manage alternatives (private messaging, supervised accounts or family devices).

Officials say this is a delay, not a criminal ban, meaning it is meant to postpone account ownership until a safer age. The eSafety site also explains appeal and recovery routes if someone is wrongly blocked.

Critics worry the law was rushed and raises privacy and fairness questions. Age verification tools like ID checks or facial-estimation services can be invasive or unreliable, and some say blocking accounts risks isolating vulnerable teens who already rely on online spaces for social support.

Others ask how the rules will work for kids who use platforms for study, fandoms, or jobs (young creators). The debate is now both practical and ethical.

Platforms will have to show regulators the steps they take. Some companies have begun removing under-16 users ahead of the deadline; others are building verification flows or sending warnings.

The eSafety Commissioner has published guidance explaining what “reasonable steps” means and offering a framework for enforcement, but how smoothly that will run in the real world is still an open question.

This is one of the first nationwide moves to set a firm minimum age for social accounts, and other countries are watching closely.

If it works, it might shape how platforms and lawmakers elsewhere balance child safety, privacy, and digital inclusion.

If it struggles, it could show the limits of technical age checks and highlight the need for different tools to protect young people.

If you have children or teens in Australia, check the official eSafety guidance, back up any data now if needed, and talk with your child about safer online habits.

Platforms are likely to announce step-by-step processes soon, and families should look out for notifications from services about account changes.

The goal is to reduce harm, but the path will need careful work from platforms, families, and regulators to get right.