
Nov 23, 2025

A clear look at major companies buying NVIDIA GPUs, why they invest in them, and what it means for their business and finances. Photo by: Network World

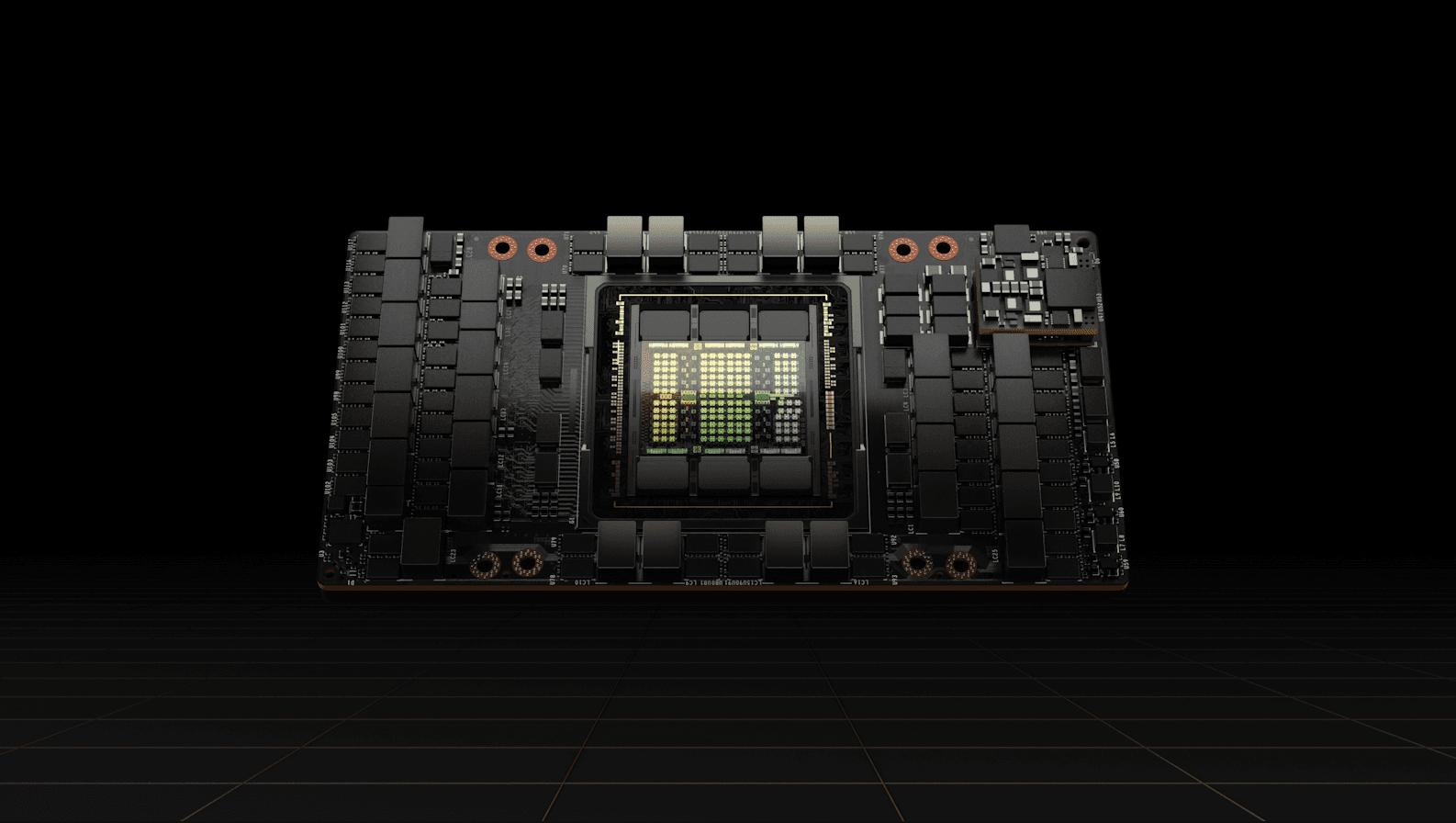

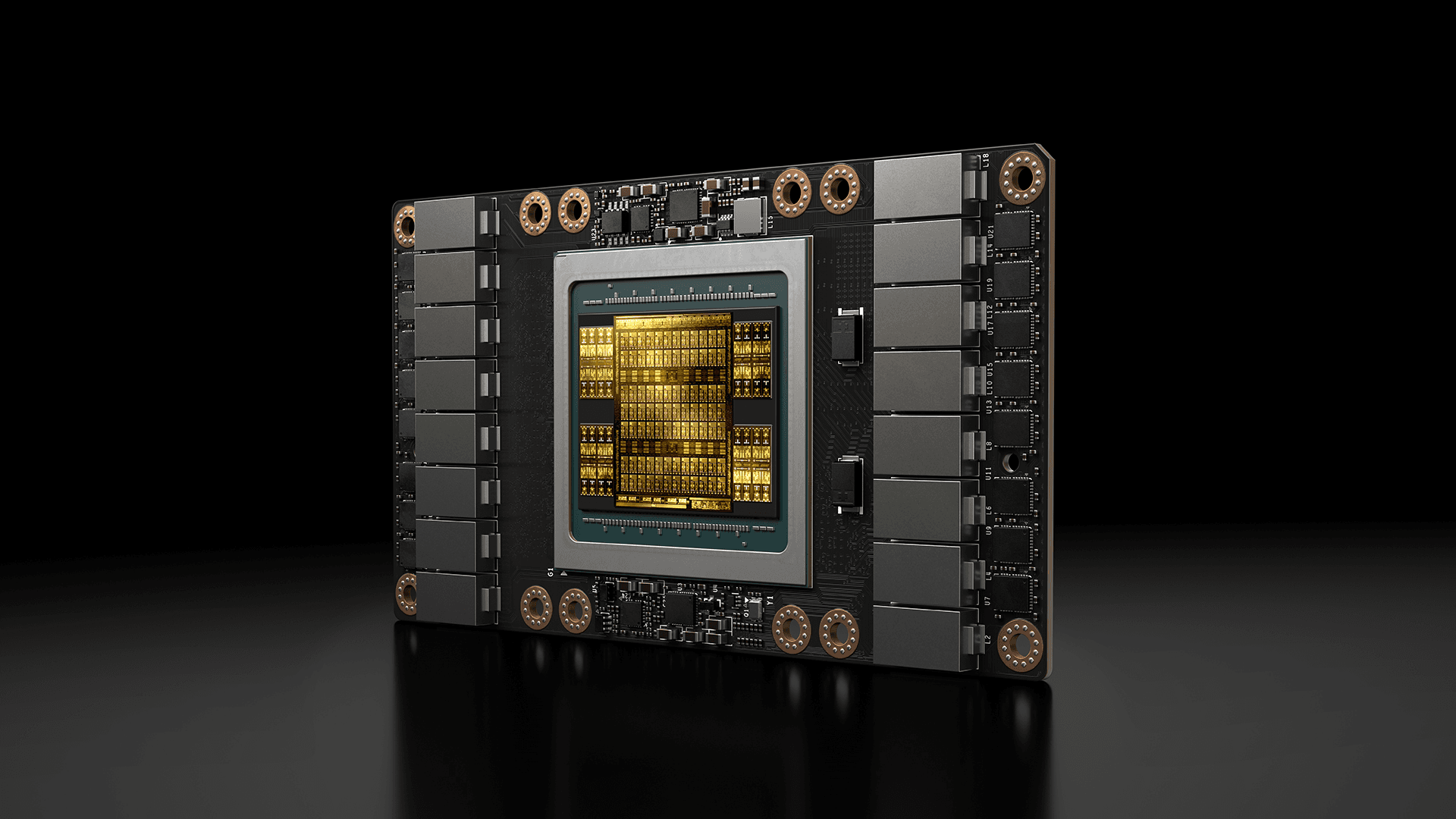

NVIDIA is best known for making graphics cards, but in recent years its products have moved far beyond gaming. The company now powers artificial intelligence, data centers, cloud computing and much more.

GPUs (short for Graphics Processing Units) are great at doing many calculations in parallel, which makes them useful for tasks like training large language models or processing huge amounts of data.

Because of this, many big companies are buying NVIDIA’s high-end GPUs to support their AI and data infrastructure. According to one source, “NVIDIA’s top buyers are likely to include Alphabet Inc. (Google), Amazon.com, Inc., Meta Platforms, Inc., Microsoft Corporation and Tesla, Inc.”

In short: If a company is serious about building large AI systems, it often ends up buying NVIDIA GPUs.

Let’s look at some major companies and how they are using NVIDIA’s tech.

Microsoft has been reported as a large buyer of NVIDIA GPUs. One article noted it planned to amass some 1.8 million GPUs by the end of 2024, many of which are likely NVIDIA chips.

The reason: Microsoft runs cloud services (via Microsoft Azure) and also AI products (like Copilot) that need massive computing power.

Owning NVIDIA GPUs helps the company train large models, serve many users, and stay competitive.

Financially: Microsoft is a huge company with multiple businesses; by investing in such infrastructure it is betting on the future of cloud computing and AI.

While exact dollar amounts for NVIDIA GPU spend are not always publicly broken out, the scale is clearly huge.

Meta (formerly Facebook) is another top buyer. It reportedly purchased around 350,000 NVIDIA H100 GPUs in one period to train large language models such as “Llama 3”.

Meta uses these GPUs for its research, social media operations, and for deploying AI-driven features. Again, the spend is substantial and signals how important NVIDIA’s GPUs are to companies building AI at scale.

Financially: Meta earns large revenue from advertising and is now shifting more into AI, virtual reality and metaverse opportunities; owning the right hardware helps with these strategies.

Amazon uses NVIDIA GPUs in several ways: through its cloud arm (Amazon Web Services, or AWS), which offers GPU-powered instances to other companies, and also for internal AI initiatives. One Business Insider report says Amazon’s retail division forecast a $1 billion AI investment for 2025 tied to GPU infrastructure.

The company also launched an effort called “Project Greenland” to optimize GPU usage internally, again emphasising how important GPU supply (i.e., NVIDIA’s) is to its operations.

Financially: Amazon is large and diversified; GPU investment supports growth in high-margin cloud services and enhances their competitive edge.

Tesla uses NVIDIA GPUs for various advanced computing needs, including autonomous driving, simulation and possibly training AI models. Articles note that Tesla bought around 15,000 NVIDIA AI chips in 2023.

Tesla’s business spans electric vehicles, energy and autonomous systems, so investing in hardware like NVIDIA’s helps it build the “software plus hardware” future.

Financially: Tesla has had high growth but also high costs and risk; the GPU investment is a bet on their future tech leadership.

Another interesting case: Hewlett Packard Enterprise (HPE) announced a joint offering, “NVIDIA AI Computing by HPE”, which integrates NVIDIA GPUs, networking and software for enterprise AI workloads.

This shows that even infrastructure companies (not just tech giants) rely on NVIDIA’s GPUs to build solutions for enterprises.

Financially: HPE is less high-flying than the big tech firms, but this move helps them participate in the AI hardware stack and services growth.

Google uses GPUs as an essential part of its cloud and research while also heavily depending on its own TPUs.

GPUs from NVIDIA remain important because they are versatile and supported by many AI tools, which makes them useful for cloud customers who want GPU instances.

Alphabet invests in both its proprietary chips and third-party GPUs to balance cost, speed and ecosystem support.

Financial angle: Google’s cloud revenue grows as it offers GPU instances to enterprise customers.

OpenAI historically trained large models on NVIDIA GPUs provided via partners; recently it has also started renting Google TPUs to supplement capacity.

GPUs remain core to much of its training and inference work, while the vendor mix can shift for cost or scale reasons.

Financial/operational note: compute costs are a major line item for AI labs; switching hardware can be a cost control lever.

Baidu uses GPUs to train large language models and to power Baidu Cloud AI services. GPUs help Baidu deliver search-plus-AI features and compete in China’s enterprise AI market.

Financial note: GPU spending supports product differentiation in a competitive ad and cloud market.

Alibaba Cloud provides GPU instances for customers and uses GPUs internally for AI and e-commerce tools. This helps Alibaba monetize AI demand in China and across Asia.

Financial angle: Cloud segment margins improve when enterprise customers pay for GPU-grade compute.

Tencent uses NVIDIA GPUs in its cloud products, for game streaming, AI research and product features across WeChat and games.

GPUs help Tencent support heavy graphics and AI workloads for millions of users.

Financial note: GPU services tie into Tencent’s platform revenue streams (games, ads, cloud).

IBM uses NVIDIA GPUs in its hybrid cloud and in its high-performance computing (HPC) stacks to serve its enterprise clients.

Partnerships and prebuilt systems (hardware+software) using NVIDIA parts help IBM sell enterprise AI solutions.

Financial angle: IBM bundles software and services with GPU hardware to earn recurring revenue.

Oracle offers GPU instances in Oracle Cloud Infrastructure and uses GPUs to power enterprise AI offerings. Oracle pitches GPU stacks to existing enterprise customers who want on-prem or cloud AI compute.

Financial note: Oracle leverages long-term contracts and enterprise relationships to monetize GPU workloads.

Dell Technologies builds NVIDIA GPUs into PowerEdge servers and AI racks sold to businesses. This gives Dell sales in both hardware and accompanying services, with GPUs forming the high-value component of AI systems.

Financial note: Server sales plus long-term support contracts are key to Dell’s profitability on AI deals.

Lenovo includes NVIDIA GPUs in its data center and edge systems for customers that need AI compute. Their approach targets enterprises that want integrated hardware solutions backed by Lenovo support.

Financial angle: This adds margin on hardware plus services and expands Lenovo’s enterprise footprint.

CoreWeave is a cloud provider focused on GPU compute. It buys large volumes of NVIDIA GPUs to rent to AI companies and studios. Its business model is built entirely around providing NVIDIA-powered instances to customers who need large GPU clusters.

Financial note: High GPU density is capital-intensive but allows premium pricing to specialized users.

Lambda sells GPU servers and clusters and sometimes uses GPUs as collateral in financing deals. Its core customers are researchers and companies that need on-prem GPU clusters.

Financial/credit note: GPUs can be so valuable that companies sometimes use them to secure loans.

Anthropic rents GPU clusters and also uses TPUs in some deals; GPUs remain part of its compute mix for training Claude. Like other AI labs, GPU choices affect cost and speed for model development.

Financial note: Compute costs are a major expense for model makers and shape fundraising and pricing strategies.

Stability AI uses GPU clusters (cloud and partner hardware) to train image and multimodal models. GPUs let Stability scale training and respond to community demand for model retraining.

Financial note: GPU costs factor into the company’s operating burn and pricing of hosted services.

Cohere uses GPUs to train and serve enterprise language models and offers hosted APIs. GPU capacity is central to Cohere’s product delivery and cost structure.

Financial angle: Their business model sells API access while shouldering GPU compute cost.

Salesforce uses GPU-backed AI infrastructure (in cloud or via partners) to add generative AI features across CRM products.

These investments aim to drive higher subscription prices and retain enterprise customers by offering advanced AI features.

Financial note: Salesforce monetizes AI features through higher ARPU (average revenue per user).

Why do these GPU purchases matter from a bigger picture standpoint?

Firstly, NVIDIA is benefiting enormously. According to sources, nearly half of NVIDIA’s revenue stems from just four big customers (Microsoft, Meta, Google, Amazon), all of which buy its AI chips.

This concentration means NVIDIA has strong leverage and its business is tightly linked to the AI trend.

Secondly, for the buyer companies these GPU purchases are big investments in hardware, often costing hundreds of millions of dollars and, at the largest reported volumes, billions.

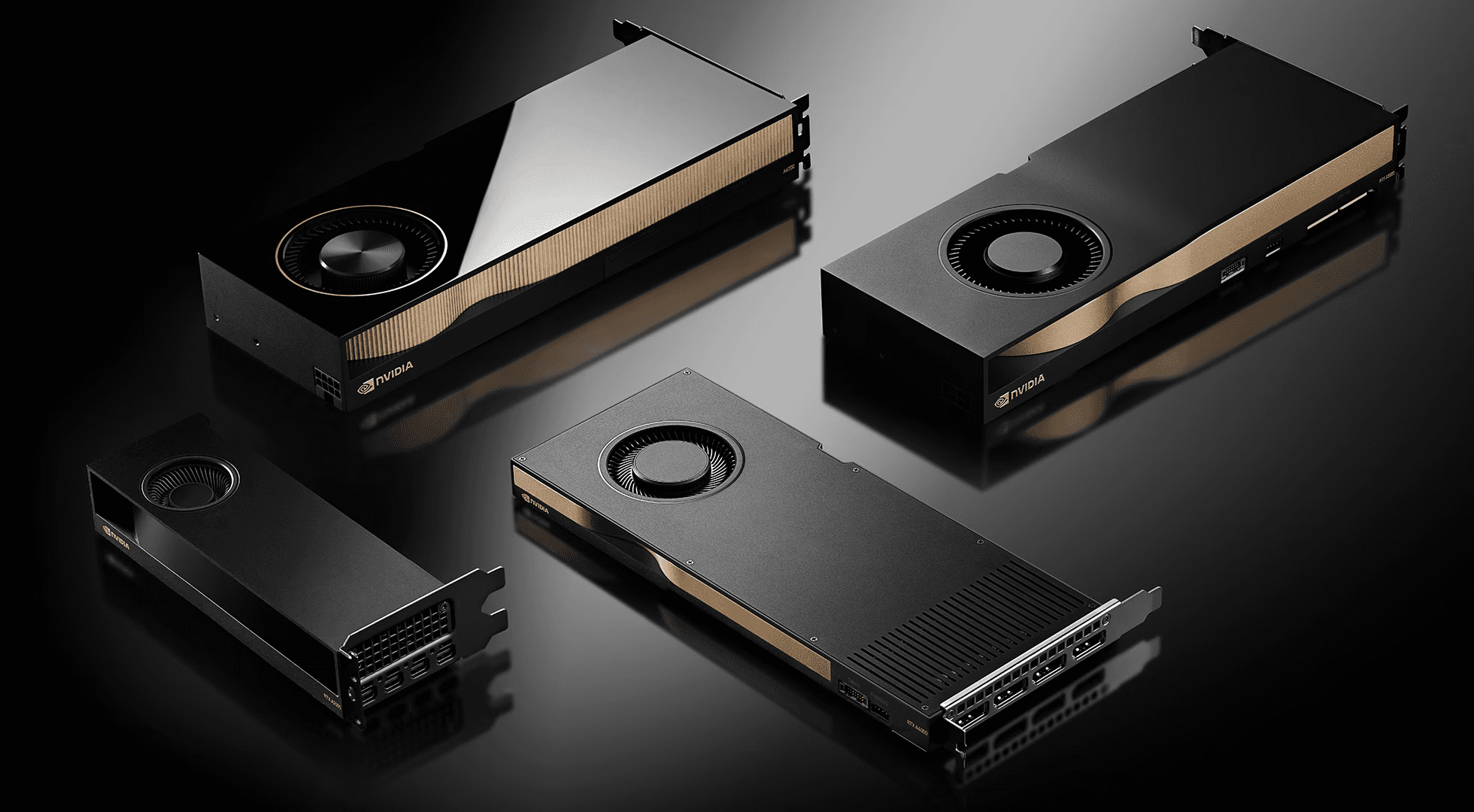

One article mentioned that businesses such as Meta and Tesla have purchased thousands of H100 GPUs to operate huge computing clusters and that each of these GPUs costs between $30,000 and $40,000.
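To get a feel for the scale, the per-GPU price range above can be multiplied by the purchase volumes quoted earlier in this article. The short Python sketch below is a back-of-envelope estimate only: the helper name `gpu_spend_range` is made up for illustration, and applying the $30,000 to $40,000 H100 price to every chip in both purchases is a simplifying assumption, not a reported figure.

```python
def gpu_spend_range(gpu_count, low_price=30_000, high_price=40_000):
    """Return a (low, high) spend estimate in US dollars for gpu_count GPUs.

    The default prices are the per-GPU range quoted in the article;
    real contract prices are not public and may differ.
    """
    return gpu_count * low_price, gpu_count * high_price


# GPU counts quoted in this article. Treating every chip as an H100 at
# list-style pricing is an assumption made only for illustration.
reported_counts = {"Tesla (2023)": 15_000, "Meta": 350_000}

for buyer, count in reported_counts.items():
    low, high = gpu_spend_range(count)
    print(f"{buyer}: ${low / 1e9:.2f}B to ${high / 1e9:.2f}B")
```

Under these assumptions, the Tesla purchase lands in the hundreds of millions of dollars, while the volume reported for Meta reaches well into the billions. Actual spend depends on negotiated prices, the mix of chip models, and the networking and data-center costs that come on top of the GPUs themselves.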

Thirdly, the ROI (return on investment) depends on whether these companies can successfully implement AI systems, develop billable customer-facing AI services, and/or automate enough to substantially lower operational costs.

If the AI investment pays off, the GPUs will help the company grow profits, but if the bet does not pay off, the GPUs will become an expensive liability.

Lastly, the investment in high-grade computing resources and AI-capable GPUs signals that the fundamental nature of business has shifted.

Without powerful computing resources, large data centers, and AI and machine learning (ML) models, a company's growth and competitiveness will suffer.

There are benefits, and therefore opportunities to pursue, but there are also risks that have to be managed.

One such risk is supply concentration: as large companies absorb much of the available supply, NVIDIA GPUs become harder for smaller companies to obtain, and some may be priced out of the market.

Some companies even use NVIDIA chips as collateral for financing, which concentrates risk further, because the same chips that run the company's systems also back its debt.

There is also the risk of technological change. GPUs are very powerful, but new computer architectures, competitors such as Advanced Micro Devices, Inc. (AMD), or proprietary hardware may challenge NVIDIA’s dominance.

Also, for the buyer companies, the question is: Will the AI systems built with these GPUs actually yield profits, reductions in cost, or competitive advantage? If not, the hardware spend becomes a sunk cost.

Finally, from an investment (stock) viewpoint: Because NVIDIA is so central, many companies’ growth metrics are tied to how well they use AI hardware. Investors should watch how effectively GPU investment translates into business outcomes.

In simple terms: NVIDIA’s GPUs are like the engine under the hood of the AI race. Big companies like Microsoft, Meta, Amazon, Tesla and HPE are buying these engines because they believe the future will run on AI and high-performance computing.

For NVIDIA the demand is huge and helps drive its revenue and valuation. For the buyer companies, the bets are large and the payoff depends on how well they can turn hardware into useful services or products.

If you’re tracking this scene, pay attention to how many GPUs a company buys, which models (H100, Blackwell etc.), how much they spend, what business outcomes follow, and how this impacts their financials.

This isn’t just tech talk; it ties into how the biggest companies in the world are positioning themselves for the future.
